If false (the default), errors are silently ignored. The optional argument strict_parsing is a flag indicating what to do with Value indicates that blank values are to be ignored and treated as if they were Indicates that blanks should be retained as blank strings. Values in percent-encoded queries should be treated as blank strings. The optional argument keep_blank_values is a flag indicating whether blank Values are lists of values for each name. The dictionary keys are the unique query variable names and the Parse a query string given as a string argument (data of typeĪpplication/x-www-form-urlencoded). parse_qs ( qs, keep_blank_values = False, strict_parsing = False, encoding = 'utf-8', errors = 'replace', max_num_fields = None, separator = '&' ) ¶ The _replace() method will return a new ParseResult object replacing specifiedĬhanged in version 3.8: Characters that affect netloc parsing under NFKC normalization will If the URL isĭecomposed before parsing, no error will be raised.Īs is the case with all named tuples, the subclass has a few additional methodsĪnd attributes that are particularly useful. Normalization (as used by the IDNA encoding) into any of /, ?, Unmatched square brackets in the netloc attribute will raise aĬharacters in the netloc attribute that decompose under NFKC Structured Parse Results for more information on the result object.

Reading the port attribute will raise a ValueError ifĪn invalid port is specified in the URL. The return value is a named tuple, which means that its items canīe accessed by index or as named attributes, which are: Or query component, and fragment is set to the empty string in Instead, they are parsed as part of the path, parameters

If the allow_fragments argument is false, fragment identifiers are not (text or bytes) as urlstring, except that the default value '' isĪlways allowed, and is automatically converted to b'' if appropriate. Used only if the URL does not specify one. The scheme argument gives the default addressing scheme, to be > from urllib.parse import urlparse > urlparse ( '//%7E guido/Python.html' ) ParseResult(scheme='', netloc='path='/%7Eguido/Python.html', params='', query='', fragment='') > urlparse ( '%7E guido/Python.html' ) ParseResult(scheme='', netloc='', path='params='', query='', fragment='') > urlparse ( 'help/Python.html' ) ParseResult(scheme='', netloc='', path='help/Python.html', params='', query='', fragment='') Result, except for a leading slash in the path component, which is retained if The delimiters as shown above are not part of the Into smaller parts (for example, the network location is a single string), and %Įscapes are not expanded. Scheme://netloc/path parameters?query#fragment.Įach tuple item is a string, possibly empty. ThisĬorresponds to the general structure of a URL: Parse a URL into six components, returning a 6-item named tuple. urlparse ( urlstring, scheme = '', allow_fragments = True ) ¶ Or on combining URL components into a URL string. The URL parsing functions focus on splitting a URL string into its components, The urllib.parse module defines functions that fall into two broadĬategories: URL parsing and URL quoting. Sftp, shttp, sip, sips, snews, svn, svn+ssh, News, nntp, prospero, rsync, rtsp, rtsps, rtspu,

Gopher, hdl, http, https, imap, mailto, mms, It supports the following URL schemes: file, ftp, The module has been designed to match the internet RFC on Relative Uniform

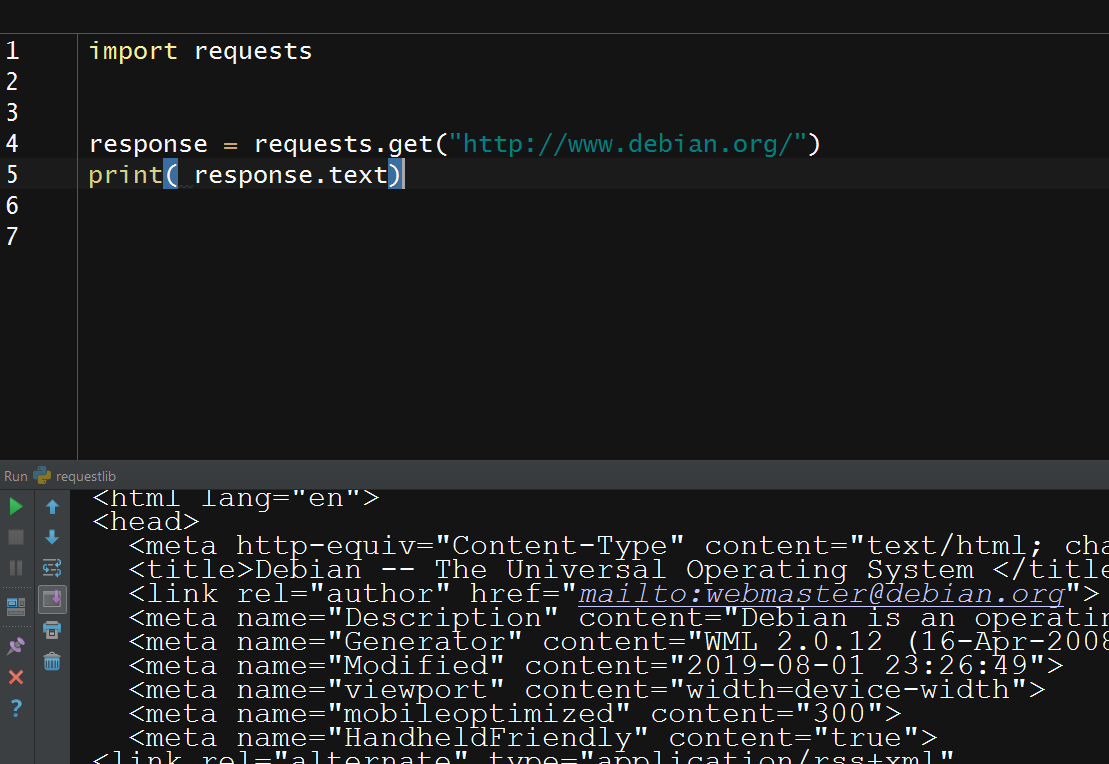

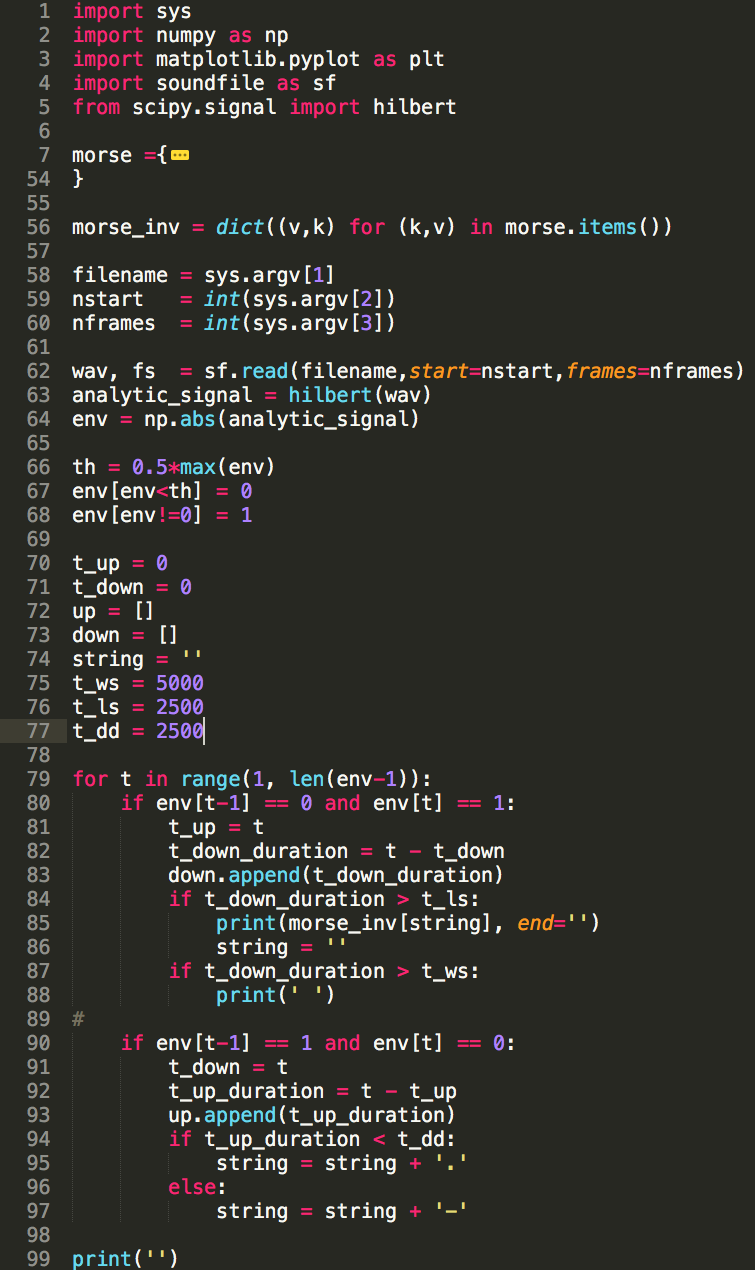

Strings up in components (addressing scheme, network location, path etc.), toĬombine the components back into a URL string, and to convert a “relative URL” This module defines a standard interface to break Uniform Resource Locator (URL) So basically using a simple while loop to iterate the characters, add any character's byte as is if it is not a percent sign, increment index by one, else add the byte following the percent sign and increment index by three, accumulate the bytes and decoding them should work - Parse URLs into components ¶ URL encoding is pretty straight forward, just a percent sign followed by the hexadecimal digits of the byte values corresponding to the codepoints of illegal characters. I know this is an old question, but I stumbled upon this via Google search and found that no one has proposed a solution with only built-in features.īasically a url string can only contain these characters: A-Z, a-z, 0-9,.
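As a rough illustration of how `urlparse()` and `parse_qs()` fit together, here is a minimal sketch. The URL and its query parameters are made up for the example and do not come from the documentation.

```python
from urllib.parse import urlparse, parse_qs

# Hypothetical URL, chosen only for illustration.
url = "https://example.com:8080/docs/index.html?name=Ada&name=Bob&empty=#intro"

parts = urlparse(url)
print(parts.scheme, parts.netloc, parts.path)   # access by attribute
print(parts[4])                                 # access by index (the query)
print(parts.hostname, parts.port)               # attribute-only fields

# Without a leading '//' the host is treated as part of the path.
relative = urlparse("www.example.com/docs")
absolute = urlparse("//www.example.com/docs")

# Repeated names are collected into lists; blank values are dropped by default.
query_default = parse_qs(parts.query)
query_blanks = parse_qs(parts.query, keep_blank_values=True)
print(query_default)
print(query_blanks)

# _replace() returns a new ParseResult; the original is left unchanged.
no_fragment = parts._replace(fragment="")
print(no_fragment.geturl())
```

Because the result is a named tuple, `parts.query` and `parts[4]` refer to the same item, and `_replace()` is the usual way to derive a modified copy.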

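The while-loop decoding approach described in the answer can be sketched roughly as follows. This is only one reading of that description, using built-ins alone: the function name `percent_decode` is hypothetical, UTF-8 is assumed as the encoding, and a truncated escape is simply rejected with a ValueError.

```python
def percent_decode(text: str, encoding: str = "utf-8") -> str:
    """Decode %XX escapes in text using only built-in features (a sketch)."""
    out = bytearray()
    i = 0
    while i < len(text):
        ch = text[i]
        if ch != "%":
            # Ordinary character: append its encoded byte(s) unchanged.
            out.extend(ch.encode(encoding))
            i += 1
        else:
            # Escape: the two hex digits after '%' encode a single byte.
            hex_digits = text[i + 1:i + 3]
            if len(hex_digits) != 2:
                raise ValueError(f"truncated escape at index {i}")
            out.append(int(hex_digits, 16))
            i += 3
    # Decode once at the end so multi-byte sequences are reassembled correctly.
    return out.decode(encoding)


print(percent_decode("%7Eguido/Python.html"))
print(percent_decode("caf%C3%A9"))
```

Accumulating raw bytes and decoding once at the end matters for characters that span several bytes, such as `%C3%A9`. The sketch does not translate `+` into a space, which form-encoded query strings would need.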